Why We Replaced Reward Shaping with Free Energy

Architecture Deep Dive · March 22, 2026 · MH-FLOCKE Level 15 v0.4.x

Every reinforcement learning tutorial starts the same way: define a reward function. Want the robot to walk? Reward forward velocity. Want it to reach a target? Reward proximity. Want it to stay upright? Penalize falling.

It works. PPO, SAC, and TD3 can solve locomotion tasks in hours. But there’s a problem that becomes obvious the moment you try to build something that actually behaves like an animal: reward functions are lies we tell the optimizer.

MH-FLOCKE doesn’t use reward shaping. It uses Free Energy — a framework from computational neuroscience that turns prediction errors into action. Here’s why that matters, and what it took to make it work.

The Problem with Rewards

A reward function encodes what the designer wants, not what the agent understands. When you write reward = forward_velocity * 0.5 - torque_penalty * 0.01 + alive_bonus * 1.0, you’re injecting your knowledge of physics, biomechanics, and task structure into a scalar signal. The agent never learns why moving forward is good. It learns that a particular number goes up when certain joint angles coincide with certain body velocities.

This creates three specific problems:

Reward hacking. The agent finds ways to maximize the number that have nothing to do with the intended behavior. A walking robot that discovers it can get alive_bonus by vibrating in place. A ball-chasing agent that orbits the ball at exactly the distance where reward is maximized without ever touching it.
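To make the vibrating-robot case concrete, here is a minimal sketch that uses the reward weights quoted above. The episode data (velocities, torque values, episode lengths) are invented purely for illustration and do not come from MH-FLOCKE or any benchmark.

```python
def shaped_reward(forward_velocity: float, torque_penalty: float, alive: bool) -> float:
    """Hand-shaped reward using the weights quoted in the text."""
    alive_bonus = 1.0 if alive else 0.0
    return forward_velocity * 0.5 - torque_penalty * 0.01 + alive_bonus * 1.0


def episode_return(steps) -> float:
    """Sum the shaped reward over (forward_velocity, torque_penalty, alive) steps."""
    return sum(shaped_reward(v, t, a) for v, t, a in steps)


# Hypothetical episodes; every number below is an illustrative assumption.
# "Vibrating": no forward progress, tiny torques, never falls in 100 steps.
vibrating = [(0.0, 1.0, True)] * 100
# "Walking": real forward progress, but the robot falls after 40 steps,
# which ends the episode and cuts off the alive bonus.
walking = [(1.0, 20.0, True)] * 40

vibrating_return = episode_return(vibrating)
walking_return = episode_return(walking)
print(vibrating_return > walking_return)  # the degenerate policy scores higher
```

Under these made-up numbers the vibrating policy collects roughly 99 reward against roughly 52 for the policy that actually walks, simply because staying alive longer pays more than moving.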

Brittle transfer. Change the terrain, the body, or the task even slightly, and the carefully tuned reward weights collapse. A reward function tuned for flat ground produces bizarre gaits on slopes because the relative importance of balance vs. speed shifts — but the weights don’t.

No intrinsic motivation. Turn off the reward, and the agent stops. It has no reason to explore, no curiosity, no drive. In biological systems, animals explore even without external reward because the nervous system is fundamentally organized around reducing prediction error — not maximizing an external signal.

Free Energy: Prediction Error as the Universal Currency

The Free Energy Principle, formulated by Karl Friston, proposes that biological systems minimize the difference between what they predict and what they observe. This isn’t a reward — it’s an error signal. The organism builds a generative model of its world and acts to make that model’s predictions come true.

In MH-FLOCKE, this translates to a concrete mechanism. The system maintains predictions about its sensory states — joint angles, body orientation, distance to objects. When reality deviates from prediction, that deviation becomes the prediction error (PE). The system then has two options: update its model (perception) or act to change the world (action).

The key insight: you don’t need to tell the system what’s good. You need to tell it what to expect. If the system expects to be near the ball, being far from the ball creates prediction error. The system will act to reduce that error — not because it’s been rewarded for approaching, but because the discrepancy between expectation and reality is aversive at a fundamental computational level.
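The two ways of reducing prediction error can be sketched in a one-dimensional toy. This is not MH-FLOCKE code: the function names, the gains, and the starting values are assumptions chosen for illustration, and the error sign follows the observed-minus-expected convention.

```python
def prediction_error(observed: float, expected: float) -> float:
    """Signed error: positive when the observation exceeds the expectation."""
    return observed - expected


def perceive(expected: float, observed: float, learning_rate: float = 0.1) -> float:
    """Perception: revise the expectation toward what was observed."""
    return expected + learning_rate * (observed - expected)


def act(observed: float, expected: float, gain: float = 0.1) -> float:
    """Action: change the world so the observation moves toward the expectation."""
    return observed - gain * (observed - expected)


# Hypothetical setup: the agent expects the ball at 0.5 m but it is 5.0 m away.
expected_distance = 0.5
ball_distance = 5.0

errors = []
for _ in range(50):
    errors.append(abs(prediction_error(ball_distance, expected_distance)))
    # The expectation is held fixed, so only action can reduce the error.
    ball_distance = act(ball_distance, expected_distance)

print(errors[0], errors[-1])
```

With the expectation held fixed, the only way to shrink the error is to close the distance, which is approach behavior without any reward term. Calling `perceive` instead would shrink the same error by giving up on the expectation.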

Implementation: Task-Specific Prediction Error

The abstract principle needed concrete engineering. Here’s how Free Energy works in MH-FLOCKE’s code.

The brain computes a Task-Specific Prediction Error (TPE) every simulation tick:

TPE = (ball_distance - expected_distance) / normalization_factor
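As a function, the formula might look like the sketch below. The function name, the guard against a non-positive normalization factor, and the example values are assumptions; only the arithmetic comes from the formula above.

```python
def task_prediction_error(
    ball_distance: float,
    expected_distance: float,
    normalization_factor: float,
) -> float:
    """TPE = (ball_distance - expected_distance) / normalization_factor."""
    if normalization_factor <= 0:
        # Assumption: a non-positive scale would flip or blow up the signal.
        raise ValueError("normalization_factor must be positive")
    return (ball_distance - expected_distance) / normalization_factor


# Hypothetical values: ball 3.0 m away, 0.5 m expected, errors scaled by 5.0 m.
far_tpe = task_prediction_error(3.0, 0.5, 5.0)
at_expected_tpe = task_prediction_error(0.5, 0.5, 5.0)
print(far_tpe, at_expected_tpe)
```

The sign matters downstream: a ball farther away than expected gives a positive TPE, and a ball at the expected distance gives zero.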

This TPE feeds into three systems simultaneously:

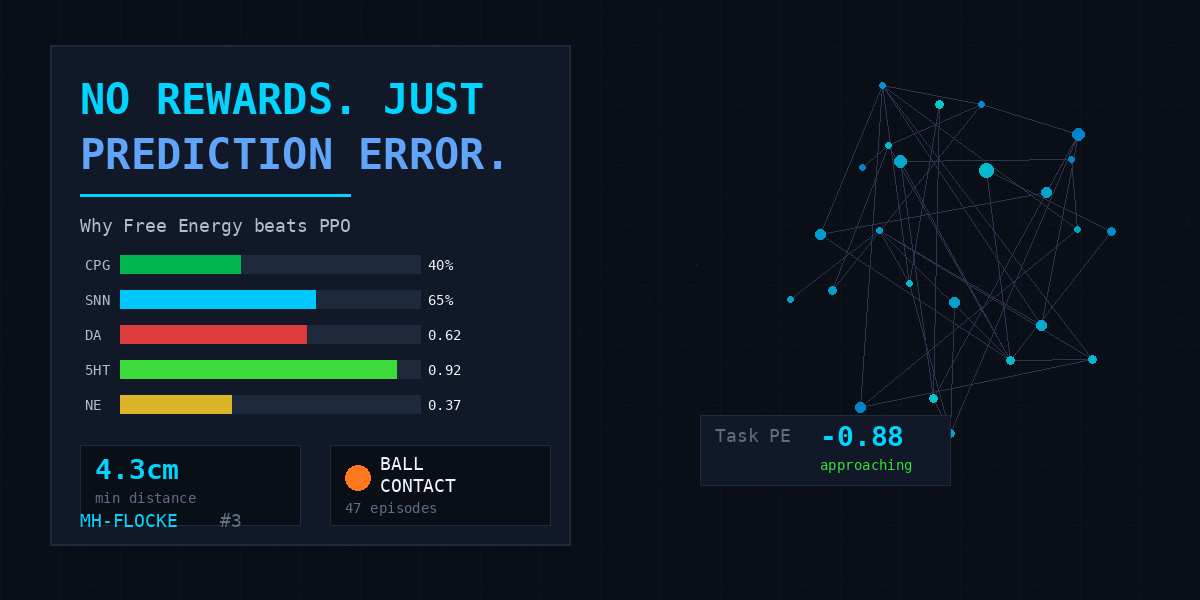

1. The SNN learning rule. R-STDP modulates synaptic plasticity based on a combination of reward and prediction error: modulation = 0.1 × reward + 0.9 × (−PE). When the dog approaches the ball, PE decreases, so −PE rises and the modulation signal becomes more positive; synapses that contributed to the approach get strengthened. The 90/10 split means prediction error dominates: the system learns primarily from its own internal error signal, not from external reward.

2. The Vision Boost. When TPE exceeds a threshold, the last 16 input neurons, which carry environmental sensory information, are amplified in proportion to the error magnitude. This is biological attention: unexpected stimuli become more salient. The dog literally pays more attention to the ball when its predictions about ball distance are wrong.

3. Neuromodulation. TPE drives the simulated neuromodulatory system. High positive PE (far from expected position) triggers exploration via norepinephrine. Decreasing PE triggers dopamine release, reinforcing the current behavioral strategy. This creates a natural explore-exploit balance without epsilon-greedy or entropy regularization.
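The three consumers of TPE can be sketched together as below. Only the 0.1/0.9 weights and the "last 16 input neurons" figure come from the text. The threshold, the gain, the use of the error magnitude for the threshold test, the neuromodulator formulas, and all function names are assumptions for illustration, not MH-FLOCKE's actual implementation.

```python
import numpy as np

REWARD_WEIGHT = 0.1    # from the text
PE_WEIGHT = 0.9        # from the text
N_VISION = 16          # from the text: the last 16 input neurons
BOOST_THRESHOLD = 0.2  # assumption: the text does not give the threshold
BOOST_GAIN = 1.0       # assumption: the text does not give the gain


def rstdp_modulation(reward: float, pe: float) -> float:
    """modulation = 0.1 * reward + 0.9 * (-PE)."""
    return REWARD_WEIGHT * reward + PE_WEIGHT * (-pe)


def vision_boost(inputs, tpe: float) -> np.ndarray:
    """Amplify the last N_VISION inputs in proportion to |TPE| above a threshold."""
    boosted = np.array(inputs, dtype=float)  # copy, so the caller's data is untouched
    if abs(tpe) > BOOST_THRESHOLD:
        boosted[-N_VISION:] *= 1.0 + BOOST_GAIN * abs(tpe)
    return boosted


def neuromodulators(tpe: float, previous_tpe: float) -> dict:
    """Toy mapping: positive error raises norepinephrine, falling error raises dopamine."""
    return {
        "norepinephrine": max(tpe, 0.0),
        "dopamine": max(previous_tpe - tpe, 0.0),
    }


# Hypothetical tick: the error fell from 0.5 to 0.3 and no external reward arrived.
modulation = rstdp_modulation(reward=0.0, pe=0.3)
boosted_inputs = vision_boost(np.ones(32), tpe=0.3)
levels = neuromodulators(tpe=0.3, previous_tpe=0.5)
print(modulation, boosted_inputs[-1], levels)
```

Keeping the three consumers as separate pure functions of the same scalar mirrors the idea that one error signal fans out across the architecture; in a real controller each would live in its own module.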

What We Lost (and What We Gained)

Free Energy is not free. Compared to PPO with a well-tuned reward function, here’s what changed:

Lost: Speed of convergence. PPO can solve ball-approach in 50k steps with a dense reward. MH-FLOCKE needs 100k steps with the curriculum. The prediction error gradient is weaker than a hand-designed reward — the signal-to-noise ratio is lower because the system has to discover the relevance of its own error signals.

Lost: Simplicity. A reward function is 5 lines of code. The Free Energy implementation spans the SNN controller, the vision boost module, the neuromodulatory system, and the R-STDP learning rule. It’s distributed across the architecture, not centralized in one function.

Gained: Robustness. The 10-seed ablation study showed that MH-FLOCKE’s variance across seeds is dramatically lower than PPO’s. When it works, it works consistently, because the learning signal comes from internal prediction dynamics, not from the accident of which random seed produces a favorable initial exploration trajectory.

Gained: Emergent behavior. The dog developed behavioral sequences — sniff → walk → trot → chase → alert — that were never programmed and never rewarded. They emerged because the prediction error landscape naturally creates behavioral attractors. When the ball is far, prediction error is high, driving fast locomotion. When close, PE drops, and the gait naturally slows. The transitions aren’t state-machine logic — they’re the dynamics of a system minimizing its own surprise.

Gained: Transfer potential. The same Free Energy architecture that drives ball approach also drives obstacle avoidance, terrain adaptation, and righting after falls. Change the prediction (expect flat ground → encounter a slope), and the system adapts — not because we wrote a slope-reward, but because the prediction error automatically captures the relevant discrepancy.

The Honest Result

Our ablation study produced one genuinely negative finding: motivational drives (hunger, curiosity, social) don’t significantly improve locomotion quality. Configuration B (SNN + Cerebellum, no drives) performs identically to Configuration C (SNN + Cerebellum + drives). The drives affect navigation — which direction the dog goes — but not how well it walks.

This is a real limitation. Free Energy as implemented in MH-FLOCKE is primarily a navigation framework, not a locomotion framework. The actual walking comes from CPGs and the cerebellar forward model. Free Energy tells the dog where to go, not how to move its legs.

In biological systems, these aren’t separate — the motivation to move and the mechanics of movement are deeply intertwined through spinal-cortical loops. MH-FLOCKE’s current architecture treats them as modular, which is both its engineering strength and its biological weakness.

What’s Next

The next step is closing the loop: letting Free Energy modulate not just navigation but gait selection. When prediction error is high (ball is far, terrain is rough), the system should shift to a more cautious gait. When PE is low (ball is close, ground is flat), it should accelerate. The CPG already supports multiple gaits — the missing piece is using prediction error to select between them.
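One way such a selector could look is sketched below. This is speculative: the gait labels, the thresholds, and the hold band in the middle are assumptions, and nothing here reflects an existing MH-FLOCKE interface.

```python
def select_gait(
    pe: float,
    current_gait: str,
    cautious_above: float = 0.6,
    fast_below: float = 0.2,
) -> str:
    """Pick a gait from the prediction error magnitude.

    High error selects the cautious gait and low error the faster one.
    In between, the current gait is kept to avoid rapid switching.
    The gait names and thresholds are illustrative assumptions.
    """
    magnitude = abs(pe)
    if magnitude >= cautious_above:
        return "walk"   # hypothetical cautious gait
    if magnitude <= fast_below:
        return "trot"   # hypothetical faster gait
    return current_gait


# Hypothetical sequence of prediction errors as the situation settles.
gait = "walk"
history = []
for pe in [0.9, 0.5, 0.3, 0.1, 0.4]:
    gait = select_gait(pe, gait)
    history.append(gait)
print(history)
```

The hold band between the two thresholds is a simple form of hysteresis: without it, an error hovering near a single threshold would make the gait flip on every tick.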

But the core insight stands: you don’t need to tell a system what’s good. You need to give it the ability to predict, and the drive to minimize the gap between prediction and reality. Everything else — approach, avoidance, exploration, caution — emerges from the dynamics of a system that hates being surprised.

MH-FLOCKE is an independent research project by Marc Hesse in Potsdam, Germany. Read the full technical details in our research paper or watch the latest results on YouTube.